Why Centroids Appear in k-Means:

From an NP-Hard Problem to a Simple Heuristic

2026-01-19

★★★☆☆

20 min

Many of you are probably very familiar with the K-means algorithm (some of you probably because I described it in my book, which is available below).

I won't bore you with an introduction to this classic, popular, and very often used method in practice. In this post, we will focus on a certain detail that is extremely important, yet every source overlooks this crucial part. Personally, I haven't yet found a book that explains the topic I'm going to discuss today in a way that satisfies me, and I believe that anyone working in machine learning should know this.

The Mysterious Equation

Let's start with an extremely simple question:Why is the heuristic algorithm for solving the K-means problem based on centroids?

But first, let's recall what centroids are, so there are no unnecessary misunderstandings and so that we are using the same definitions.

Let' assume:

• \(X = \lbrace x_1,\dots,x_n \rbrace \subset \mathbb{R}^p\) be the set of observations,

• \(x_i = (x_{i1},\dots,x_{ip})\),

• \(C_k \subset \lbrace1,\dots,n\rbrace\) be the set of indices of points assigned to cluster \(k\),

• \(|C_k|\) denote the number of observations in cluster \(k\).

Then the centroid of cluster \(k\) is the vector

$$ \bar{x}_k = (\bar{x}_{k1},\dots,\bar{x}_{kp}) \in \mathbb{R}^p $$

with coordinates

$$

\bar{x}_{kj}

=

\frac{1}{|C_k|}

\sum_{i \in C_k} x_{ij},

\qquad j = 1,\dots,p.

$$

Let's return to the question: Why does K-means use centroids?

To answer this question, we need to take a step back and remember that K-means is an optimization problem. This means that it aims to find a solution that minimizes a certain objective function. In the case of K-means, the objective function is the sum of the squared distances between all pairs of points across all clusters, that is $$ \sum_{k=1}^{K}\frac{1}{|C_k|}\sum_{i,i'\in C_k}\sum_{j=1}^{p}(x_{ij}-x_{i'j})^2 $$ This equation exactly captures the essence of the K-means method. We want to divide a set of points into groups so that points that are close to each other are in the same cluster. But nowhere here does the concept of centroids appear. So where do they come from in the K-means algorithm?

When I was reading books describing the principles of K-means (as is the case, for example, in the popular "An Introduction to Statistical Learning..."), the only thing I came across was a certain equality left unexplained: $$ \frac{1}{|C_k|}\sum_{i,i'\in C_k}\sum_{j=1}^{p}(x_{ij}-x_{i'j})^2=2\sum_{i\in C_k}\sum_{j=1}^{p}(x_{ij}-\overline{x}_{kj})^2 $$ Which we can read as:

The average of the sum of squared distances over all ordered pairs of points in a cluster equals twice the sum of squared deviations of points from the cluster centroid.

This seems quite intuitive, since the centroid "centers" all the points, but although intuition is of course important, in mathematics we still need rigorous proofs.

Now in our equation centroids appear explicitly. However, I haven't found an explanation anywhere of why this equality holds. Don't you think this is quite an important step?

Exactly! That's why we will break this equality down into its basic components and show where it comes from.

Warm up

Let us emphasize an obvious but important fact: since the dataset contains a finite number of observations, all the sums appearing in the equation are finite sums. Therefore, we can safely apply theorems about changing the order of finite summations: $$ \sum_{i,i'} \sum_{j} a_{i,i',j} = \sum_{j} \sum_{i,i'} a_{i,i',j} $$ Note that we can pull the summation over \(j\) outside on both sides of the equality, since it is a finite sum: $$ \sum_{j=1}^{p} L_j = \sum_{j=1}^{p} R_j $$ where: $$ L_j := \frac{1}{|C_k|}\sum_{i,i'\in C_k}(x_{ij}-x_{i'j})^2 $$ $$ R_j := 2\sum_{i\in C_k}(x_{ij}-\bar x_{kj})^2 $$ Therefore, it suffices to show that for every \(j\) the following equality holds: $$ \forall j \quad L_j = R_j $$ Thanks to this, we can in a sense forget about \( j \), that is, focus on a single dimension \( j \) and prove the equality for each coordinate separately.Breaking The Equation Down

Good. Since we have already dealt with the issue of forgetting the summation over \( j \) (of course, we will return to summing over \( j \) at the end), let us now expand the left-hand side of the equation: $$ \begin{align} L_j &= \frac{1}{|C_k|}\sum_{i,i'\in C_k}(x_{ij}-x_{i'j})^2 \\ &= \frac{1}{|C_k|}\sum_{i,i'\in C_k}(x_{ij}^2 - 2x_{ij}x_{i'j} + x_{i'j}^2) \\ &= \frac{1}{|C_k|}\left( \sum_{i,i'\in C_k} x_{ij}^2 - 2\sum_{i,i'\in C_k} x_{ij}x_{i'j} + \sum_{i,i'\in C_k} x_{i'j}^2 \right) \\ \end{align} $$ Now, notice that the first sum does not depend on \( i' \): $$ \sum_{i,i'\in C_k} x_{ij}^2 = \sum_{i\in C_k} x_{ij}^2 \sum_{i'\in C_k} 1 = |C_k|\sum_{i\in C_k} x_{ij}^2 $$ Similarly the third sum: $$ \sum_{i,i'\in C_k} x_{i'j}^2 = |C_k|\sum_{i'\in C_k} x_{i'j}^2 = |C_k|\sum_{i\in C_k} x_{ij}^2 $$ where the last step follows from the fact that the indices \(i\) and \(i'\) run over the same set.Now, let us focus on the middle sum. Here, we can use the property of finite sums that allows us to change the order of summation: $$ \sum_{i,i'\in C_k} x_{ij}x_{i'j} = \left(\sum_{i\in C_k} x_{ij}\right) \left(\sum_{i'\in C_k} x_{i'j}\right) $$ Now, attention - an important trick will be needed! But before that, let us recall the definition of the centroid.

For the \( j \)-th coordinate, the centroid of cluster \( k \) is given by: $$ \bar{x}_{kj} = \frac{1}{|C_k|} \sum_{i \in C_k} x_{ij} $$ From this, we have: $$ \sum_{i \in C_k} x_{ij} = |C_k|\bar{x}_{kj} $$ Therefore: $$ \sum_{i,i'\in C_k} x_{ij}x_{i'j} = |C_k|^2 \bar x_{kj}^2 $$ Do you see now where centroids appear in the K-means algorithm? Let us substitute our results back into the expression for \( L_j \): $$ \begin{align} L_j &= \frac{1}{|C_k|}\left( \sum_{i,i'\in C_k} x_{ij}^2 - 2\sum_{i,i'\in C_k} x_{ij}x_{i'j} + \sum_{i,i'\in C_k} x_{i'j}^2 \right) \\ &= \frac{1}{|C_k|}\left( |C_k|\sum_{i\in C_k} x_{ij}^2 - 2|C_k|^2 \bar x_{kj}^2 + |C_k|\sum_{i\in C_k} x_{ij}^2 \right) \\ &= \sum_{i\in C_k} x_{ij}^2 - 2|C_k| \bar x_{kj}^2 + \sum_{i\in C_k} x_{ij}^2 \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - |C_k| \bar x_{kj}^2 \right) \end{align} $$

Now, let us focus on the right-hand side of the equation \( R_j \): $$ \begin{align} R_j &= 2 \sum_{i\in C_k}(x_{ij}-\bar x_{kj})^2 \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - 2\bar x_{kj}\sum_{i\in C_k} x_{ij} + \sum_{i\in C_k} \bar x_{kj}^2 \right) \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - 2\bar x_{kj}\sum_{i\in C_k} x_{ij} + |C_k| \bar x_{kj}^2 \right) \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - 2\bar x_{kj}|C_k|\bar x_{kj} + |C_k| \bar x_{kj}^2 \right) \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - 2|C_k|\bar x_{kj}^2 + |C_k| \bar x_{kj}^2 \right) \\ &= 2 \left( \sum_{i\in C_k} x_{ij}^2 - |C_k|\bar x_{kj}^2 \right) \\ \end{align} $$ Which proves that: $$ L_j = R_j $$ Summing over \( j = 1, \dots, p \), we obtain exactly our original equality: $$ \frac{1}{|C_k|}\sum_{i,i'\in C_k}\sum_{j=1}^{p}(x_{ij}-x_{i'j})^2=2\sum_{i\in C_k}\sum_{j=1}^{p}(x_{ij}-\overline{x}_{kj})^2 $$

Why do we use centroids?

Now that we have proven the equality, we can finally answer the question posed at the beginning of this post: Why does K-means use centroids?Having this equality, we can transform an NP-hard problem into an iterative approximate procedure, which in each iteration updates the centroids and cluster assignments until convergence:

- Assume some initial centroids

- Assign each point to the nearest centroid

- Recalculate the centroids as the means of the newly formed clusters

- Repeat until convergence

I described the principles of the k-means algorithm in detail in my book, in the section "Clustering Harmony: Unveiling the Power of Data Grouping with K-Means".

A Tribute To The Equality

Would we be able to effectively solve the k-means problem without this equality?No.

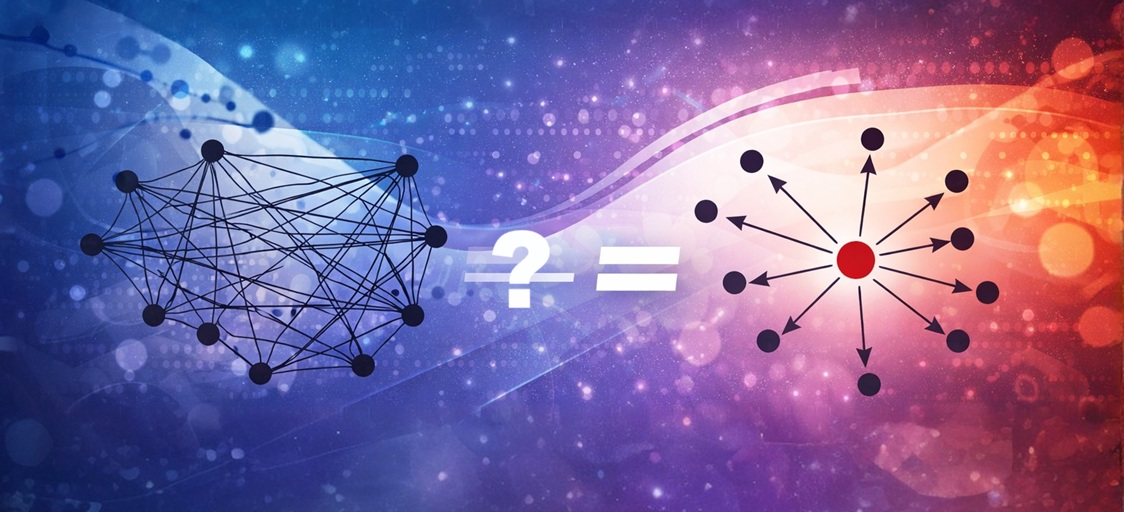

Let's take a look at a image illustrating the difference between considering all pairs of points in a cluster versus considering deviations from the centroid.

I think it's worth giving this equation the respect it deserves and understanding its significance in the context of the k-means algorithm. It is precisely thanks to this equality that we can efficiently use a heuristic algorithm to find good partitions of data points into clusters.

I think it's worth giving this equation the respect it deserves and understanding its significance in the context of the k-means algorithm. It is precisely thanks to this equality that we can efficiently use a heuristic algorithm to find good partitions of data points into clusters.Without it, we would have to consider all possible partitions of points (and the pairs of points for which we compute distances is \( n^2 )\) into clusters, which is computationally practically impossible to solve even for small datasets.

Formally, it has been proven that the k-means optimization problem is NP-hard, even for \( K=2 \) in \( \mathbb{R}^p \).

Even for \(k=2\) clusters in \(p\)-dimensional space, finding the optimal partition of points that minimizes the k-means objective function is NP-hard problem.

You can find the proof here.I hope I satisfied your curiosity and at the same time, explain how crucial this equality is.

Conclusion:

In this article, we started with a deceptively simple question: why does k-means rely on centroids?

K-means is an NP-hard optimization problem defined in terms of pairwise squared distances between points within clusters. Solving this problem directly would require examining an astronomical number of possible partitions, making exact optimization infeasible even for modest dataset sizes.

The key insight lies in the equality that transforms this pairwise objective into a sum of squared deviations from a single representative point - the centroid. This transformation is not a cosmetic rewrite. It fundamentally changes the structure of the problem.

Thanks to this equality, we can apply an efficient iterative heuristic - Lloyd's algorithm - that alternates between assigning points to the nearest centroid and updating centroids as cluster means. Each step provably decreases the objective, even though the global optimum remains out of reach in general. This is precisely why k-means works so well in practice despite its theoretical hardness.

Without this equality, there would be no principled way to justify centroid updates, no efficient surrogate objective, and no scalable algorithm. In that sense, the success of k-means is not accidental - it is a direct consequence of this mathematical structure.

Understanding this equality is therefore more than a technical detail. It explains why centroids appear in k-means, why the heuristic is meaningful, and why a fundamentally hard problem admits such a simple and effective algorithm in practice.

Happy learning! 😁

Get In Touch

info@machinelearningsensei.com

© machinelearningsensei.com. All Rights Reserved. Designed by HTML Codex